ABN AMRO Verzekeringen (AAV) is a Dutch insurer and intermediary offering services for consumers and business customers. As at many other organizations, its data domain was mainly IT-oriented, with a strong focus on tech delivery. Even though data from multiple source systems had been made accessible through a newly implemented data virtualization platform, this tech focus meant that data had been less of a priority for business departments.

This led the leadership to wonder: “The data is there, but what can we do with it?”

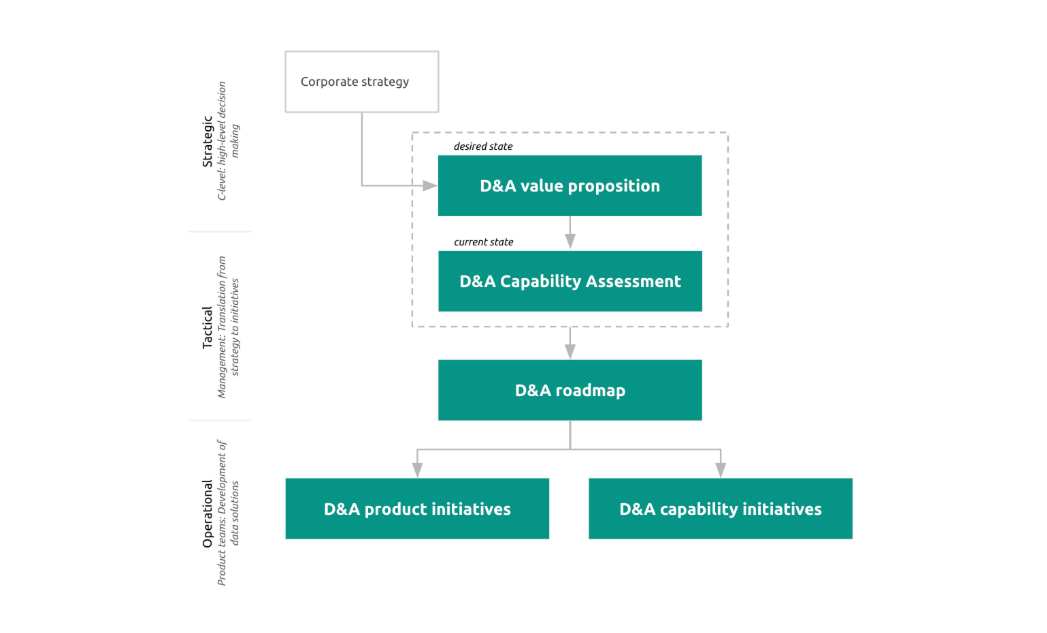

To address this challenge, Xomnia proposed to AAV an approach based on our Data & AI Strategy offering:

Step one: Determine the data & analytics (D&A) strategy by answering three main questions:

Step two: Develop data & analytics products.

Step three: Build data & analytics capabilities (team, organization, tools, sources).

The plan was sound, and the first phase went very smoothly. After just a couple of months, the data & analytics strategy was ready, acting on business priorities while developing AAV’s data capabilities (people, organization, tech and data itself).

Everybody was thrilled. The next phase, execution of the D&A strategy, was the moment certain challenges started popping up.

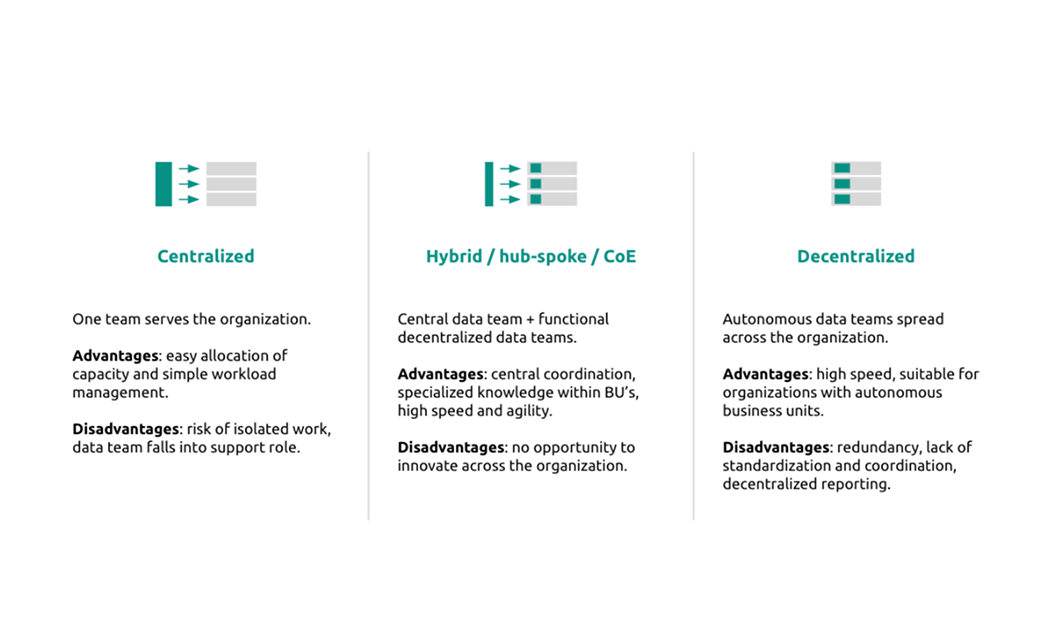

To build data products, you need data people. AAV already had talented data people, but they were spread across the organization. Such a decentralized model has its benefits: there’s a high level of autonomy, and ‘local’ data heroes can quickly react to changing needs of the business unit they’re part of.

A decentralized way of working also has its downsides: it often has a lower level of standardization and coordination (sometimes called silos), meaning that there’s a risk of the wheel being reinvented over and over again. Additionally, allocating data professionals to business units meant they had to become ‘jacks of all trades’: a little data engineering, some reporting and visualization, a sprinkle of advanced analytics, long-running analyses… Having local data professionals also meant that business departments didn’t really have an incentive to develop data analysis skills themselves. When an ad hoc data question arose, people looked to their 'data guy' for a quick answer, creating a bottleneck for insights that are relatively easy to gain.

The decentralized model generally fits companies that have autonomous (geographical) units, or a high level of data maturity (meaning data is a natural part of product development).

As mentioned earlier, the focus on tech delivery resulted in IT and business being somewhat separate worlds. This was illustrated during the first couple of Product Increment (PI) events that happened quarterly at AAV: application teams used tech talk to convey their platform plans to a business audience that was wondering when they would finally see some operational benefits. In addition, some important new business initiatives were not on the agenda, causing frustration and misaligned priorities.

This schism was also apparent in the domain of data governance. Business teams often moved on their own initiative when developing data products, and did not involve people like architects and security officers (who resided in the IT department) until a later stage.

During the creation of the D&A strategy, it became clear that some business ambitions could be supported by developing machine learning (ML) models - for example to generate product recommendations or predict churn. But where should these models be run?

AAV already had a data delivery platform, but it’s quite a step to also start implementing a data science platform to properly deploy ML models. Do the potential benefits justify such a big infra move? And which tool makes sense from an architectural standpoint?

Besides figuring out the infrastructure, ML model deployment itself involves a high level of complexity as well. Productionizing a model always has a system impact; there is usually a landscape of intertwined applications and platforms in which introducing something new may result in breaking something else. In AAV’s case, deploying a recommendation engine meant that the data science platform needed to access the data (virtualization) platform and write its output to the CRM. Otherwise, the model would not actually be operational and useful. Because of these interacting apps/platforms and the fact that multiple teams owned parts of the system, priorities and ownership needed to be carefully negotiated.

Deciding how best to use the data, whether for reporting, predicting or automating, meant that access to certain data sources via the data platform had to be prioritized over others. This conflicted with the planning of the data engineering teams responsible for building the datasets (part of the separation between business and IT described above). It also exposed an open question: should reporting and modeling be done on operational systems or on a centralized data platform?

Finally, high expectations about the ‘monetization of data’ produced some impatience along the way. Some stakeholders were eager: “We understand the potential value, but when are we going to see some actual results?” While it is understandable that proving the value of data science involves certain steps (hypothesis, business case, backtest or ‘retrodiction’, field test or experiment), business stakeholders wanted quick wins as well.

Certain circumstances were optimal for AAV’s transformational journey. For a start, there was sponsorship at the right level. The CCO and CIO jointly initiated the project, which added weight and made sure both the business and IT departments were on board. Another crucial aspect was mandate: Xomnia was trusted with the means (time, budget and decision-making power) required to transform things. It also really helped that the involved teams and stakeholders - with no exceptions - showed openness, eagerness, and enthusiasm during the one-and-a-half-year journey.

Last but not least, the partnership between AAV & Xomnia had a clearly defined end-state: Xomnia’s primary goal was to make itself redundant. At the end of the journey, AAV no longer needed Xomnia’s services because all of the knowledge and capacity had been built up within the company.

To address the challenges of AAV’s decentralized data organization, we introduced a hybrid (or hub-and-spoke) model. With it came new, multidisciplinary teams combining data and ML engineering, reporting, and business profiles such as analysts and product owners (POs). This allowed the teams to take ownership of the data products they developed, while ensuring both deeper specialization and better mobility between business areas. As a result, the multidisciplinary teams had a positive effect on data-business integration.

To further increase the level of data literacy across different departments, we initiated the move towards self-service BI. This allowed business users to do their own analyses, freeing up data team capacity to focus on structural work instead of ad hoc questions.

These first steps towards a data-driven organization needed a tangible stage, so a data community was started for developers and users to showcase work, exchange ideas, and share knowledge. AAV’s growing data culture also attracted new talent - something interesting was brewing! Being able to fill tech positions in a market marked by the ‘war for talent’ and the move towards freelancing is a testament to AAV being a very attractive place to work.

To truly anchor data products in the heart of AAV, Agile was further embraced and business teams were involved in every stage of the development process, including design, feature engineering, and testing. Each sprint review drew a larger crowd, with the dev team demo-ing the impact their progress had made. This also meant being open about things that went wrong or didn’t work, and building trust along the way. Short dev cycles paired with active feedback ensured that the teams stayed on the right track.

Besides business stakeholders, data governance stakeholders (teaming up in the newly instated Data Office) were also involved in each development stage. Use case canvases and potential solutions were subjected to a ‘roast’ to prevent brake-pulling in later phases. Interactions and decisions about architecture, security, data quality, risk, privacy and ethics were documented and frequently updated.

Being a joint venture of ABN AMRO and Nationale Nederlanden meant that AAV wasn’t alone in figuring out which data science platform would be a proper fit. Ample experience with several platform providers existed in the ecosystem of companies, with many architects happy to advise. When it was decided that a certain tool was to be implemented, ML engineers collaborated with cloud platform engineers to configure and launch it in record time.

To battle deployment complexity, data squads this time teamed up with the CRM squad and solution architect to share timelines (priorities were already set during the PI event) and jointly design and integrate the solution across platforms.

Behind the tug-of-war over which data source to deploy first was a deeper issue: data engineers were part of the IT teams developing the policy administration application. This could lead to them only performing migration tasks (which save IT costs in the long run, but have no direct business value) without extracting, loading and transforming new data sources (which has direct business value).

Instead, it was decided to have data engineers join the data teams, which meant that actual end-to-end data products could be developed by deploying data sources in line with the prioritized use cases. In the meantime, data sources already available were leveraged to clear long-standing business requests for insights, such as operational KPIs, sales funnels, and campaign effectiveness.

As mentioned, the first ML models did take a while to develop (and test), which tried the business’ patience. However, with the company discovering what is possible with AI, additional requests for automating operations (processing calls, handling claims, analyzing complaints) came in. As the data teams learned to work more effectively, development time for these models dropped sharply compared to earlier work.

At the end of the journey, AAV had data teams producing data products that business departments used to achieve real commercial and operational impact. Data is now a natural part of the company’s day-to-day business. Data is cool!

With this new level of data maturity, the data POs collectively concluded that there was no real need for an updated data strategy. The original data strategy had done its duty by addressing existing challenges, focusing effort, and guiding transformation - but now came the time to look further ahead.

The client chose to explore an innovative product vision approach: creating a north star per data domain should inspire stakeholders during quarterly PI events to set even bolder ambitions and continuously drive commercial impact with data.